HyperCard (HC) was a brilliant program that came free with every Macintosh computer from 1987 and was in development until around 2004. It made it possible to create multimedia ‘stacks’ (of cards) and was very popular with linguists. For example, Peter Ladefoged produced an IPA HyperCard stack, and SIL had stacks for drawing syntactic trees and for exploring the history of Indo-European (see their listing here). Texas and FreeText, both created by Mark Zimmerman, allowed you to create quick indexes of very large text files (maybe even into the megabytes! Remember this is the early 1990s). I used FreeText when I wrote Audiamus, a corpus exploration tool that let me link text and media and then cite the text/media in my research.

My favourite HC linguistic application was J. Randolph Valentine’s Rook, which presented a speaker telling an Ojibwe story (with audio), with interlinear text linked to a grammar sketch of the language. I adapted that model for a story in Warnman, told by Waka Taylor, produced as part of a set of HC stacks called ‘Australia’s languages’ and released in 1994. This model of linking media and text was new: computers at the time were unable to deal with large media files, and the first monitors were monochrome, so colour was not part of the presentation. The main problem was that HC was eventually no longer produced or supported, so the many stacks that existed had to be converted to other formats. The surviving framework for HC is LiveCode, and I still run Audiamus as a LiveCode stack to give me easy access to 23 hours of transcripts. But earlier HC stacks that were not converted at the time are not playable in LiveCode.

With the help of David Nash I discovered a Mac OS9 emulator called SheepShaver that runs on my current system and, by finding an installer for HC and running it under emulation, I am now able to play HC stacks on my Mac. The next step was to capture the stacks as video so others could see them without going through the fiddly business of emulation. Using QuickTime Player’s “New Screen Recording” function I could capture the screen, but audio was only captured from the input mic, not internally. To fix this I installed Soundflower, which allows you to capture sound from internal sources. The problem then was that I could capture the sound but couldn’t hear it, as Soundflower diverts the sound to internal capture and not to the speakers. So I ran Audacity in a side window, with its input set to Soundflower, and was able to see an image of the waveform while the audio played. This allowed me to move the HC stack through its cards at about the right time, as each piece of the story was completed. I’ve put the video of this stack on YouTube (see below) and have given the same treatment to the ‘Australia’s languages’ stacks (also below), which include Ngaanyatjarra greetings by Lizzie Ellis, who agreed to me putting them here again (after doing the original work some 25 years ago).

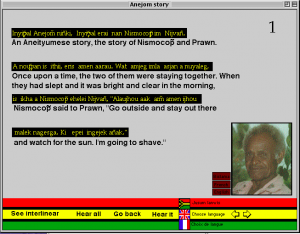

Another rescue mission was on an Aneityum story told by Philip Tepahae and recorded by John Lynch, also produced in HyperCard (in 1995). This one was extracted from the stack and time-aligned in Elan before being uploaded into EOPAS (which no longer works).

Revisiting the Anejom story that was in HyperCard, then in Eopas, both of which are no longer functioning. It had been recorded by the late John Lynch, with the late Philip Tepahae speaking. I had made an augmented reality version in which I made a video of the Eopas instance, with the relevant text being highlighted as it was played. This was the only surviving version of the audio, and now I have learned my lesson and put it into PARADISEC (https://catalog.paradisec.org.au/collections/JL2/items/anejomstory).

Update: The files are in the Internet Archive, running under emulation here: https://archive.org/details/hypercard_australias-languages_2020-08-05. You need to be patient: the emulator takes a minute to load, then you need to double-click on the disk icon, then on the icon that says AustraliasLanguages. When it asks you for StartHere, just select the item presented to you.

The Warnman Story is available here: https://archive.org/details/hypercard_warnman-story.

The list of language names is available here: https://archive.org/details/hypercard_aboriginal-language-names.

A set of wordlists in different Aboriginal languages is here: https://archive.org/details/hypercard_aiatsis-word-lists.